Sculpted Interaction: A Design-First Approach to AI Alignment

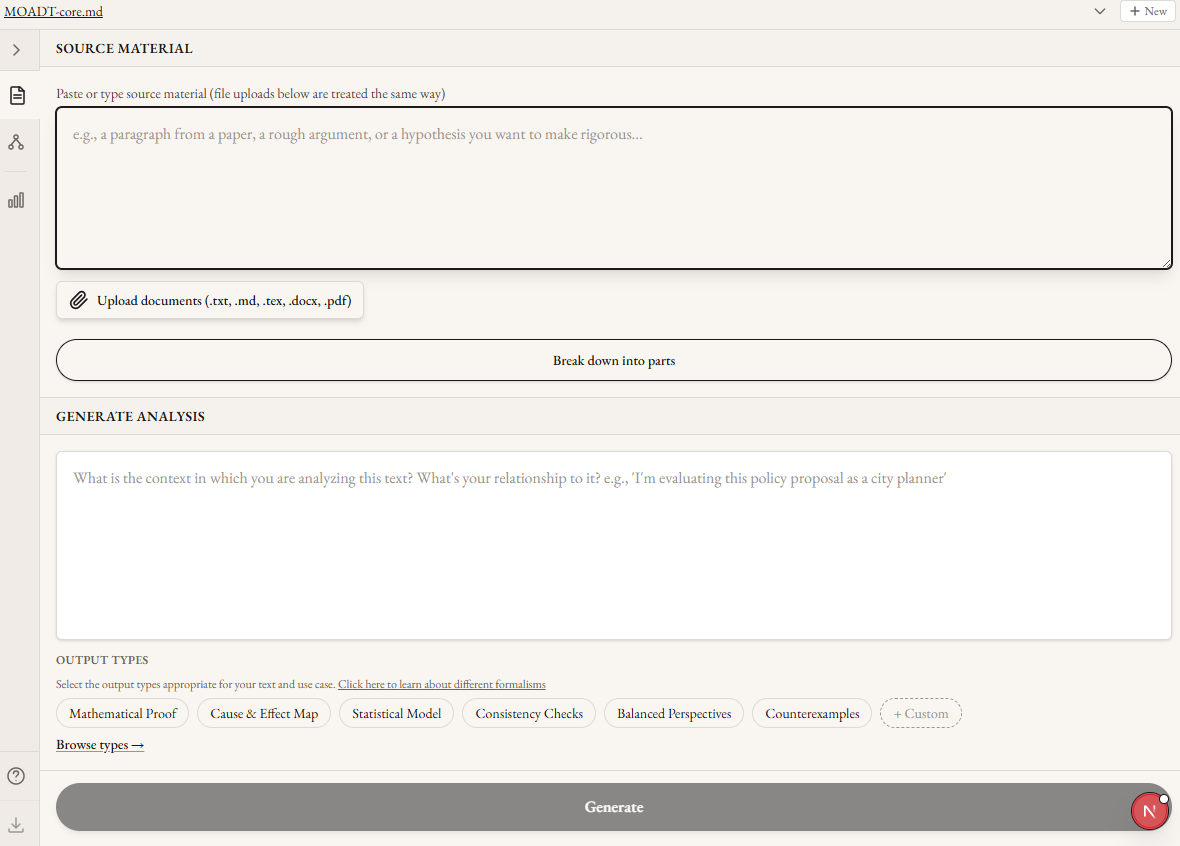

Acknowledgments: Thanks to Aditya Adiga for leading this project and trusting his ideas to me. Thanks to Matt Farr for comments on this draft. Thanks to Kuil Schoneveld for organizing the project. And thanks to the several friends who tested the MFC. This work was done as part of Groundless’ Autostructures project in the AI Safety Camp.

In most security systems the biggest vulnerability is the people involved. A secure password doesn’t help if you leave your computer unlocked in public. Encryption in transit doesn’t protect a file if the key is attached to the same e-mail.

The same logic applies to AI alignment. The best theories of human value won’t help a user who chooses (or is pushed) to surround themself with sycophancy and addictive slop. Alignment techniques that work on paper, applied through interfaces that systematically undermine human judgment, will produce bad outcomes regardless of the underlying model’s values. A core requirement of building healthy relationships between humans and AI is therefore the shape each interaction takes. And the standard chatbot format does not meaningfully constrain or scaffold positive interactions.

This isn’t a novel observation, but it’s one that has seen limited engineering attention from major labs or the safety community (with some exceptions). Most safety-oriented AI work involves either the AI model directly (alignment and interpretability work) or regulating the deployment of new models (liability frameworks, AI pauses). Even IDE-integration interfaces like Cursor or Claude Code are thin wrappers around chat interfaces, leaving users the task of decomposing their intent into commands. This ignores an important direction for improving AI outcomes: building tools and interfaces that make good use of AI structurally easier than bad use. Not behavioral nudges, but lower-level architectural choices that bring meaningful engagement to the forefront and remove the opportunity to stop thinking about your work.

If we want to ha