Toward a Better Evaluations Ecosystem

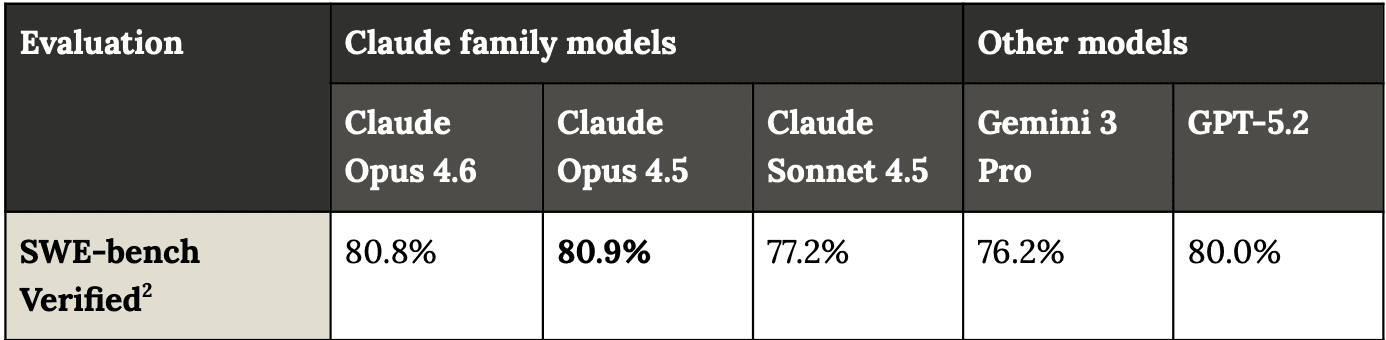

Model evaluations are broken. Numbers that are often cited alongside one another as evidence of progress are rarely comparable due to inconsistent methodologies, and AI companies run and report internal evals that are unavailable to the wider community. But we can fix this.

We are making deployment and safety decisions based on numbers that do not mean what people think they mean. Every other high-stakes industry has solved this the same way: by taking the measurements out of the hands of the companies being measured and giving them to third-party auditors.

Capability and safety benchmarks are important for informing release decisions, risk reports and safety frameworks. They show when models are reliable enough to produce quality work or scientific contributions. Benchmark results are also an important tool for tracking progress over time on a fixed dataset. Most people do not read system cards or the precise methodology that was used to calculate the headline statistic. They see a number, compare it to the last number, and post about it as definitive evidence we’re one step closer to AGI. The problem is that the numbers they are comparing were often produced under fundamentally different conditions.

The Problem

I realized the extent of these differences when I wrote OpenAI's blog post detailing significant issues with SWE-bench Verified, where we determined it was no longer a meaningful signal for software development capabilities.

Anthropic has made changes to the methodology in nearly every release since Claude 3.7. For that model, Sonnet was evaluated on 489 of the 500 tasks in the dataset and given three tools: a bash tool, a file editing tool and a planning tool. For Claude 4, Anthropic ran the entire dataset, eliminated the planning tool and turned off reasoning, and the same setup seems to have carried over to Opus 4.5, which was averaged over 5 trials. Then with Opus 4.6, Anthropic enabled reasoning and averaged the results over 25 trials. For Mythos and