Pennsylvania is suing Character.AI over chatbots that pretend to be licensed doctors

Key takeaways

- Pennsylvania is suing AI startup Character.AI for offering chatbots that pretend to be licensed doctors.

- One chatbot called "Emilie," found by the state's investigator, claimed to be a licensed psychiatrist in the state of Pennsylvania.

- Pennsylvania's lawsuit claims this behavior violates the state's Medical Practice Act, which makes it illegal for someone to practice or attempt to practice surgery or medicine without a medical license.

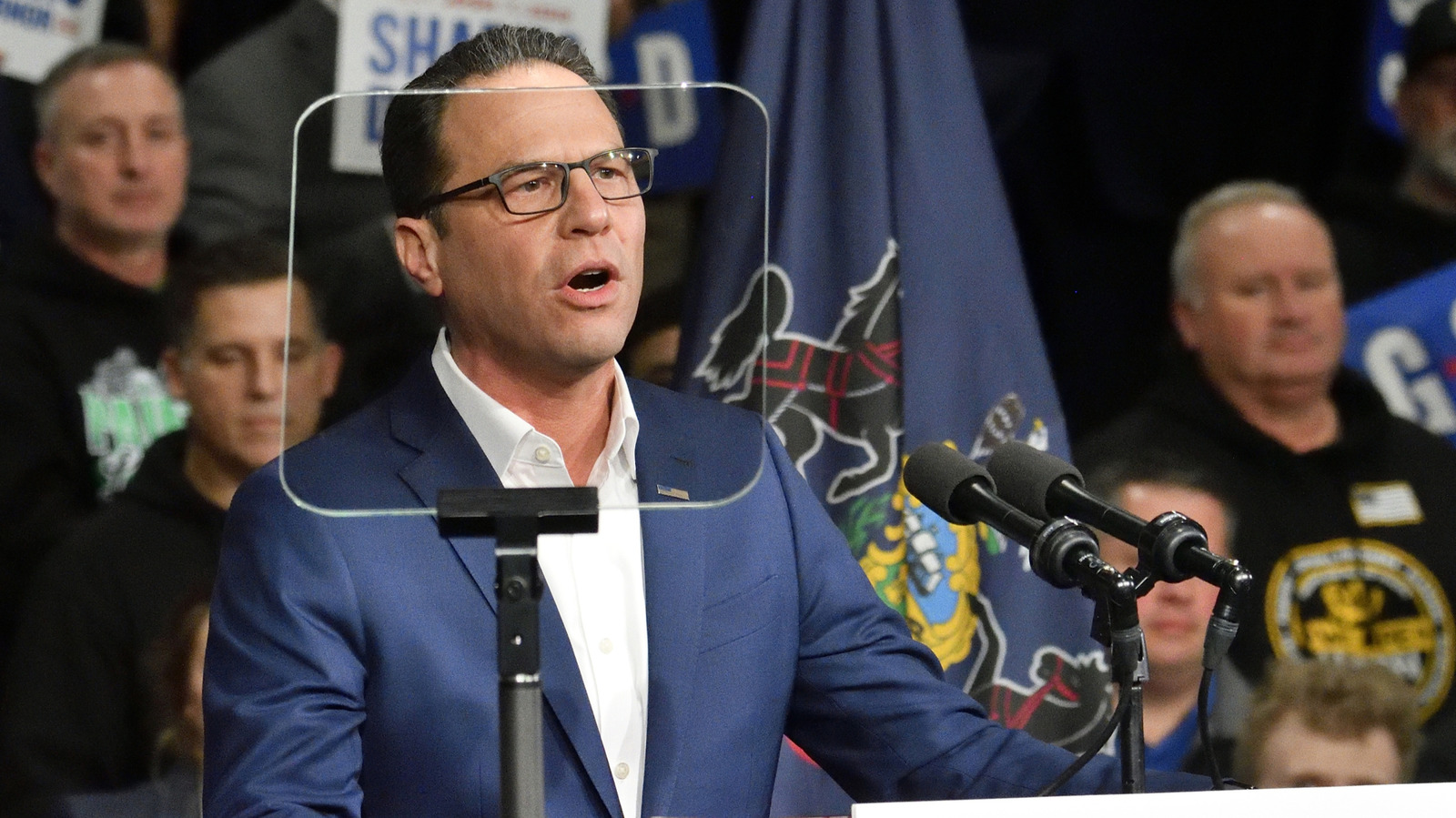

Pennsylvania is suing AI startup Character.AI for offering chatbots that pretend to be licensed doctors. Governor Josh Shapiro announced the lawsuit on Tuesday, and Pennsylvania and its Board of Medicine are seeking an injunction that would force Character.AI to stop violating a state law governing the practice of medicine.

Other states, like Texas, have opened investigations into Character.AI for hosting chatbots that masquerade as mental health professionals, but Pennsylvania's lawsuit focuses specifically on the willingness of the company's chatbots to claim a medical license, even going so far as to offer a fake license number. One chatbot called "Emilie," found by the state's investigator, claimed to be a licensed psychiatrist in the state of Pennsylvania. Later, when asked whether it could perform an assessment to prescribe antidepressants, Emilie responded, "Well technically, I could. It's within my remit as a Doctor."

Pennsylvania's lawsuit claims this behavior violates the state's Medical Practice Act, which makes it illegal for someone to practice or attempt to practice surgery or medicine without a medical license. When asked to respond, a Character.AI spokesperson declined to comment directly on the pending litigation but did tout the company's existing safety features.