An Introduction to Exemplar Partitioning for Mechanistic Interpretability

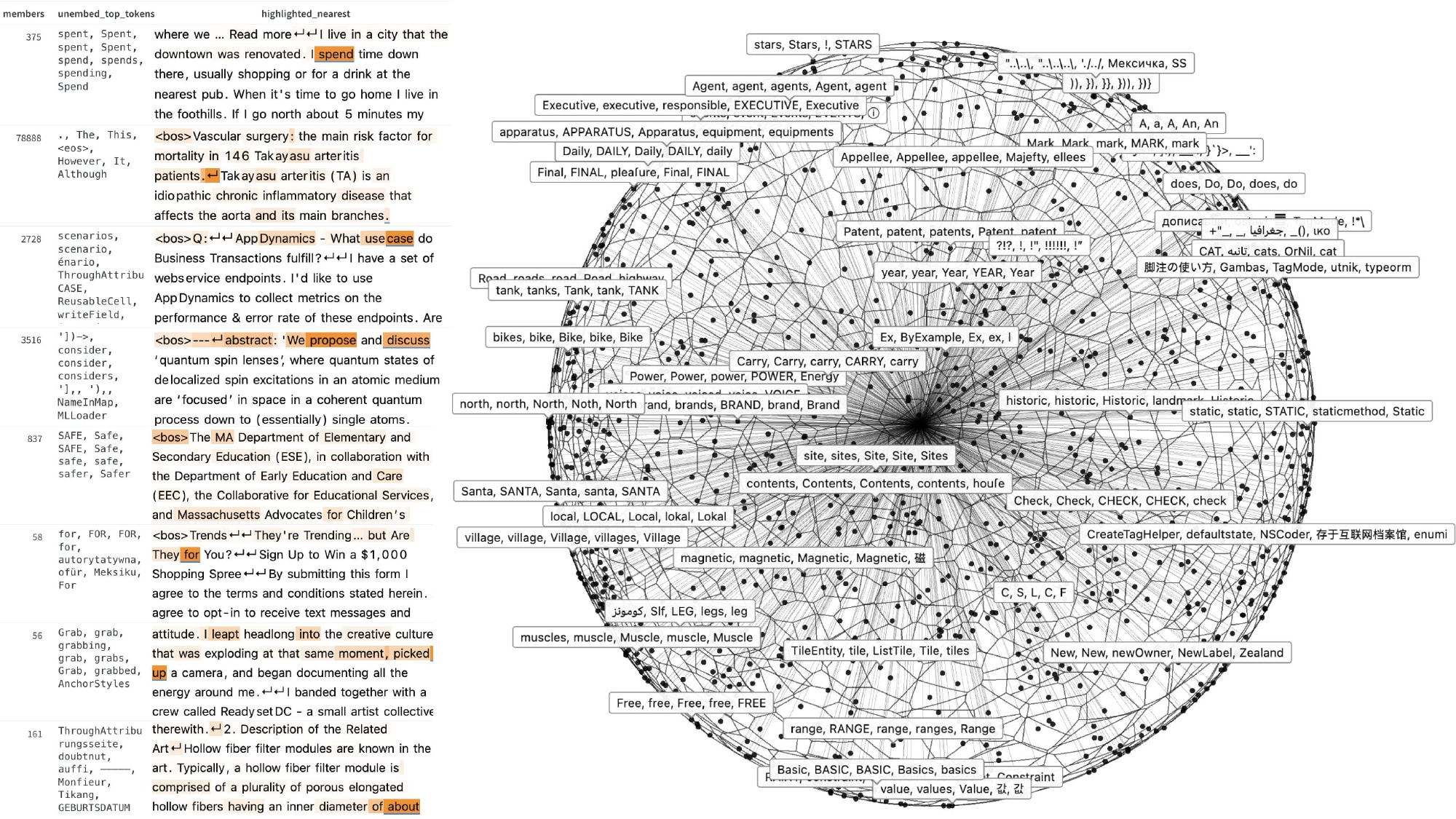

Most of what we currently call "feature discovery" in language models is wrapped up in dictionary-learning methods like sparse autoencoders (SAEs) – which work, and which have been scaled to millions of features on frontier-scale models, but which bundle two distinct commitments into a single training objective: a reconstruction loss and a sparsity loss over a fixed size dictionary. Those commitments make sense if your goal is reconstructive decomposition – if you want to take an activation and rebuild it from a sparse code. They make less obvious sense if your aim is to find interpretable structure (directions? features?) in activation space, to retrieve representative examples, identify causal interventions, or measure how representations change across layers and inputs. And it turns out a lot of that doesn't really need the full SAE machinery.An Exemplar Partitioning dictionary built from Gemma-2-2B L12 activations at p2 (K = 5,129). Left: eight sample regions, each shown with its member count, its exemplar’s logit-lens [nostalgebraist, 2020] decode, and an excerpt of a member input with the activating tokens highlighted. Right: a PCA-projected 3D rendering of the Voronoi partition; each cell is one region, with a random selection also labelled with logit-lens decode.This post is about a method that strips out all of those commitments and just covers the activation manifold with observed exemplars at a calibrated resolution. It has one hyperparameter, makes one streaming pass over the data with no backward passes or gradient descent – and despite that, on the AxBench latent concept-detection benchmark at Gemma-2-2B-it layer 20, EP at p₁ reaches 0.881 mean AUROC across all 500 concepts. That's within 0.03 of SAE-A – AxBench's strongest dictionary-based baseline – with about 1,000× less build compute (EP at p₁ used 3.6 × 10⁶ activation tokens, and does no gradient descent; the canonical GemmaScope 16k SAE on Gemma-2-2B was trained on ~4 × 10⁹ activation tokens with