Reinforcement learning scaling might incentivise hidden reasoning architectures for AI

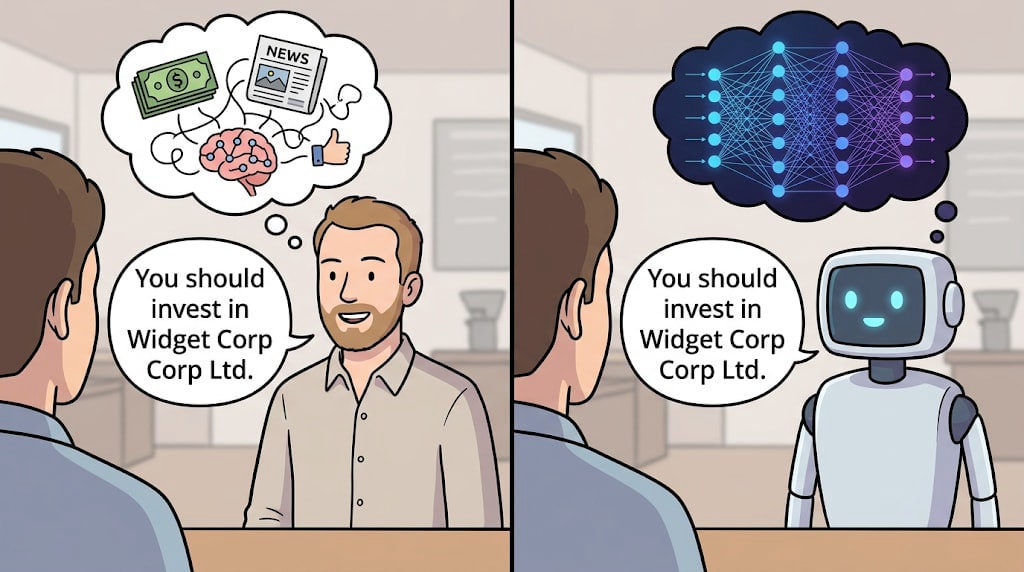

In short: the transformer architecture brought massive scale to AI, and also provided partial guarantees of ‘reasoning out loud’, an unprecedentedly interpretable situation for AI. Reinforcement learning (RL) may be less compatible with the transformer architecture, and RL is being scaled up at the frontier of AI. So we might see the end of the ‘reasoning out loud’ era for AI.

This is part 2 of a series about LLM architecture and some implications for reasoning and transparency. Part 1 explained how we got where we are. Here, we look at where we might be going soon: does visible reasoning go away?

Hidden reasoning

There’s a term that’s caught on somewhat to describe deep learning: inscrutable. Deep learning centrally relies on (large) neural networks, whose workings are famously impenetrable to those training and operating them alike. I won’t expand much on that here.[1]

The key thing is: a neural network takes inputs, converts them into an opaque and idiosyncratic internal language, then computes outputs. The arcane bit in the middle goes by many names: deep embeddings, neuralese, activation space, latent space. I’ll call it hidden reasoning.

Humans do this too, of course! You can’t tell what someone’s thinking or planning unless they tell you and they’re honest about it. (And apart from the surface thoughts we’re aware of, we don’t even know most of what’s going on in our own subconscious.)

Honest, earnest, helpful? Who knows? (Image gen using gemini.google.com)

A dash (em-dash?) of luck: ‘thinking out loud’

Hidden reasoning is concerning. Worst case, we have scheming AIs. Best case, it’s hard to know how much to trust the outputs: not because you think the AI is deceiving you, but because you just don’t know what it’s taking account of, and how, in its decisions.

But we got quite lucky for a spell! Scaling language models turned out to be the easiest path to general purpose reasoning AI, and not only that, but the famed ‘attention is all you need’ transformer archit